The year 2025 was one of extraordinary opportunity and heightened responsibility for courts worldwide. The justice sector continued its rapid digital evolution, navigating a landscape shaped by synthetic media, escalating cybersecurity threats, and a fast-maturing technology ecosystem—one in which AI is no longer experimental, but increasingly embedded into daily operations. At the same time, expectations around reliability, transparency, and the integrity of the official record reached an all-time high.

Below are the key trends that defined the year—and will continue shaping justice technology throughout 2026.

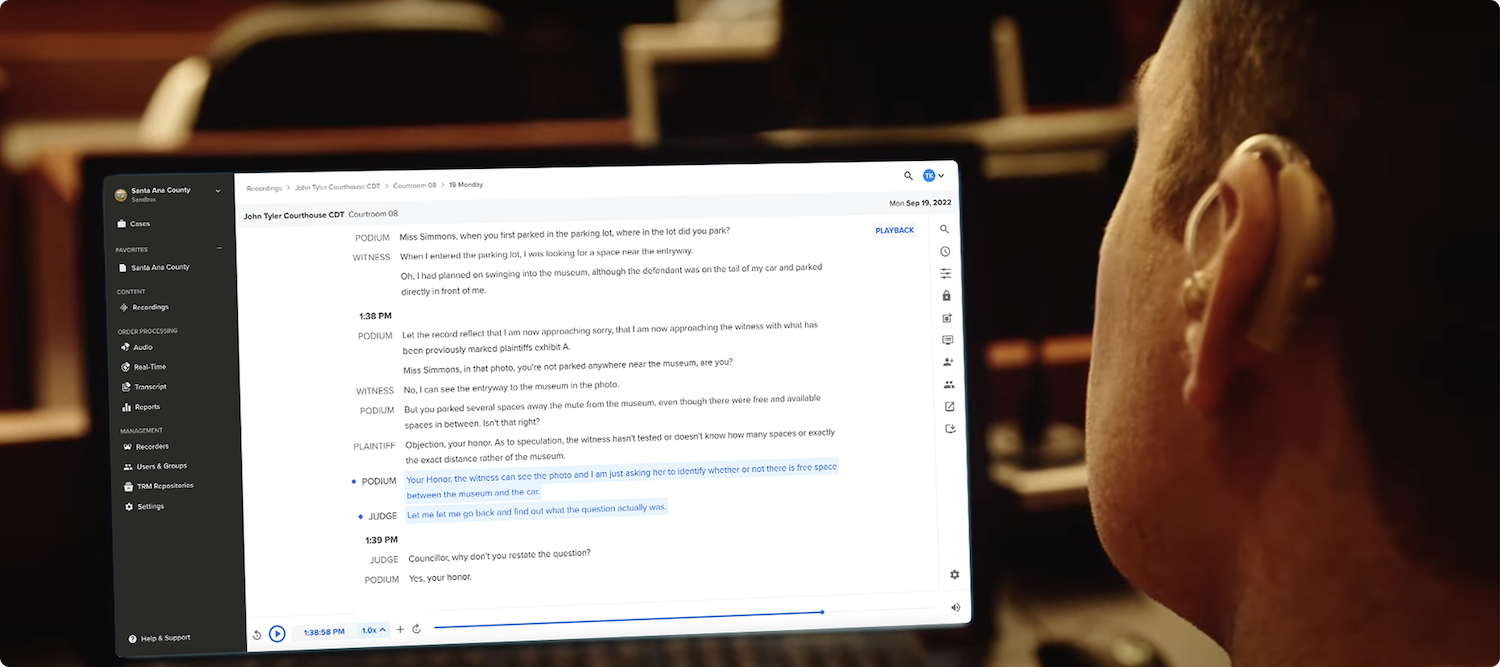

The rise of hyper-realistic synthetic media has reshaped the evidentiary landscape. Deepfakes introduce real risks for externally sourced evidence—but they do not undermine the integrity of court recordings, which are created within closed, end-to-end systems protected against tampering.

This distinction between externally sourced evidence and the court’s own recordings is crucial. Third-party videos, phone recordings, and even body-worn camera footage often lack a guaranteed provenance chain. Courtroom recordings, by contrast, are captured, transferred, and stored within sealed environments designed to prevent manipulation.

This year brought:

Rather than reducing trust in digital recording, deepfakes highlight the need for verified digital evidence. The real challenge lies with external media entering the courtroom, not with the court’s own official record. Because court-grade recordings are captured, transferred, and stored within controlled, closed systems secured end to end, they are resistant to tampering and remain essential to an accessible, comprehensive record of proceedings.

What deepfakes should prompt is faster adoption of stronger integrity measures for evidence: authenticated capture, cryptographic signatures, digital provenance tracking, advanced forensic analysis tools, stricter chain-of-custody procedures, and ongoing judicial training to help legal professionals identify manipulated media.
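To make two of these measures concrete, here is a minimal sketch of how a cryptographic fingerprint and a signed chain-of-custody log can work together. It is an illustration under stated assumptions, not a description of any particular court system or vendor product: the function names, the log format, and the shared demo key are all hypothetical, and a production system would more likely use asymmetric signatures with managed keys.

```python
import hashlib
import hmac
import json

# Hypothetical demo key (assumption). Real systems would use managed keys
# or asymmetric signatures rather than a hard-coded shared secret.
SECRET_KEY = b"demo-key-not-for-production"


def fingerprint(media: bytes) -> str:
    """Return the SHA-256 digest of the media bytes."""
    return hashlib.sha256(media).hexdigest()


def _sign(entry: dict) -> str:
    """HMAC-SHA256 over a canonical JSON encoding of the entry."""
    payload = json.dumps(entry, sort_keys=True).encode("utf-8")
    return hmac.new(SECRET_KEY, payload, hashlib.sha256).hexdigest()


def append_event(log: list, actor: str, action: str, media: bytes) -> None:
    """Record a custody event, linked to the previous event's signature."""
    entry = {
        "actor": actor,
        "action": action,
        "media_sha256": fingerprint(media),
        "prev_signature": log[-1]["signature"] if log else "",
    }
    # The signature is computed before it is stored in the entry.
    entry["signature"] = _sign(entry)
    log.append(entry)


def verify(log: list, media: bytes) -> bool:
    """Check every link in the log and that the media still matches it."""
    prev_signature = ""
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "signature"}
        if body["prev_signature"] != prev_signature:
            return False  # an event was removed, reordered, or inserted
        if not hmac.compare_digest(_sign(body), entry["signature"]):
            return False  # an event was edited after it was signed
        if body["media_sha256"] != fingerprint(media):
            return False  # the media no longer matches what was logged
        prev_signature = entry["signature"]
    return True
```

In this sketch, each handoff of an exhibit would call `append_event`, and anyone holding the key could later call `verify` against the file presented in court. Changing a single byte of the media, or editing any earlier log entry, causes verification to fail.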

Courts have become increasingly attractive targets for ransomware, data theft, and disruption. Security has shifted from something handled “in the background” to something that has to be built into everyday operations.

Key developments included:

It’s no longer enough for vendors to simply meet basic compliance checkboxes. Courts now expect partners who follow recognized security frameworks and standards, such as those published by the National Institute of Standards and Technology (NIST), System and Organization Controls 2 (SOC 2) Type 2, the Criminal Justice Information Services (CJIS) Security Policy, and UK Cyber Essentials, and who can demonstrate that their systems hold up under pressure.

Figure: A graph comparing For The Record’s security framework controls against top competitors.

But there’s also a growing awareness that security can’t stop at the vendor boundary. Courts themselves are strengthening their own practices: tightening access controls, improving internal network hygiene, and treating security as an ongoing responsibility rather than a periodic audit.

AI adoption in the justice sector shifted into a more grounded phase in 2025. Courts moved past experimental pilots and hype-driven narratives toward practical, human-supervised applications that meaningfully support judicial workloads.

This year saw:

Courts aren’t resisting AI—they’re refining it. The sector is moving toward responsible, explainable, and purpose-built AI that improves efficiency without compromising impartiality or due process.

If 2024 accelerated digital transformation, 2025 raised expectations across every dimension—security, authenticity, resilience, and purpose-driven innovation.

In 2026, we expect to see:

The justice sector is not just modernizing—it is reframing what trustworthy, resilient, and technologically sound digital justice must look like for years to come.